I’m not going to lie: Conducting an in-depth SEO audit is a major deal.

And, as an SEO consultant, I can tell you there are few sweeter words than, “Your audit looks great! When can we bring you onboard?”

Even if you haven’t been actively looking for a new gig, knowing your SEO audit nailed it is a huge ego boost.

But are you terrified to start? Is this your first SEO audit? Or do you just not know where to begin? Sending a fantastic SEO audit to a potential client puts you in the best possible position.

It’s a rare opportunity for you to organize your processes and rid your potential client of bad habits (cough*unpublishing pages without a 301 redirect*cough) and crust that accumulates like the lint in your dryer.

So take your time. Remember: Your primary goal is to add value to your customer with your site recommendations for both the short-term and the long-term.

Ahead, I’ve put together the need-to-know steps for conducting an SEO audit, plus a little insight into the first phase of my process when I get a new client. It’s broken down into sections below. If you feel you have a good grasp of a particular section, feel free to jump to the next.

This is a series, so stay tuned for more SEO audit love. 💖

When Should I Perform an SEO Audit?

After a potential client sends me an email expressing interest in working together and answers my survey, we set up an intro call (Skype or Google Hangouts is preferred).

Before the call, I do my own quick mini SEO audit (I invest at least one hour in manual research) based on their survey answers to become familiar with their market landscape. It’s like dating someone you’ve never met.

You’re obviously going to stalk them on Facebook, Twitter, Instagram, and all other channels that are public #soIcreep.

Here’s an example of what my survey looks like:

Here are some key questions you’ll want to ask the client during the first meeting:

Sujan Patel also has some great recommendations on questions to ask a new SEO client.

After the call, if I feel we’re a good match, I’ll send over my formal proposal and contract (thank you HelloSign for making this an easy process for me!).

To begin, I always like to offer my clients the first month as a trial period to make sure we vibe.

This gives both the client and me a chance to become friends before we start dating. During this month, I’ll take my time to conduct an in-depth SEO audit.

These SEO audits can take me anywhere from 40 hours to 60 hours depending on the size of the website. These audits are bucketed into three separate parts and presented with Google Slides.

After that first month, if the client likes my work, we’ll begin implementing the recommendations from the SEO audit. And going forward, I’ll perform a mini-audit monthly and an in-depth audit quarterly.

To recap, I perform an SEO audit for my clients:

What You Need from a Client Before an SEO Audit

When a client and I start working together, I’ll share a Google Doc with them requesting a list of passwords and vendors.

This includes:

Tools for SEO Audit

Tools needed for technical SEO audit:

Step 1: Add Site to DeepCrawl and Screaming Frog

Tools:

What to Look For When Using DeepCrawl

The first thing I do is add my client’s site to DeepCrawl. Depending on the size of your client’s site, the crawl may take a day or two to get the results back.

Once you get your DeepCrawl results back, here are the things I look for:

Duplicate Content

Check out the “Duplicate Pages” report to locate duplicate content.

If I identify duplicate content, I make rewriting those pages a top priority in my recommendations to the client. In the meantime, I add the <meta name="robots" content="noindex, nofollow"> tag to the duplicate pages.
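DeepCrawl’s own matching logic isn’t documented here, but as a rough illustration of how exact duplicates can be spotted, the sketch below strips tags, normalizes whitespace and case, hashes what’s left, and groups URLs that share a hash. The `pages` dictionary and its URLs are hypothetical; near-duplicates (pages that differ by a sentence or two) would need a fuzzier technique such as shingling.

```python
import hashlib
import re
from collections import defaultdict


def normalized_text(html: str) -> str:
    """Crudely strip tags and collapse whitespace so markup differences don't matter."""
    text = re.sub(r"<[^>]+>", " ", html)
    return re.sub(r"\s+", " ", text).strip().lower()


def find_exact_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized text is identical; only groups of 2+ are returned."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, html in pages.items():
        digest = hashlib.sha256(normalized_text(html).encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical crawl output: URL -> raw HTML
pages = {
    "https://example.com/shoes": "<h1>Red Shoes</h1><p>Great shoes.</p>",
    "https://example.com/shoes?color=red": "<h1>Red  Shoes</h1>\n<p>Great shoes.</p>",
    "https://example.com/hats": "<h1>Hats</h1><p>Warm hats.</p>",
}
print(find_exact_duplicates(pages))
```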

Common duplicate content errors you’ll discover:

How to fix:

Here’s an example of a duplicate content issue I had with a client of mine. As you can see below, they had URL parameters without the canonical tag.
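To spot the same pattern in a crawl export, you can flag URLs that carry query parameters but whose `rel="canonical"` target is missing or doesn’t point to the parameter-free version. This is a minimal sketch, assuming you already have each URL’s canonical href (or `None`) from your crawler; the URLs are hypothetical, and treating the parameter-free URL as the correct canonical is an assumption that won’t hold for every parameter.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit


def strip_query(url: str) -> str:
    """Return the URL without its query string or fragment."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


def missing_canonicals(canonicals: dict[str, Optional[str]]) -> list[str]:
    """Flag parameterized URLs whose canonical is absent or isn't the clean URL."""
    flagged = []
    for url, canonical in canonicals.items():
        if urlsplit(url).query and canonical != strip_query(url):
            flagged.append(url)
    return flagged


# Hypothetical crawl export: URL -> canonical href found on that page
crawl = {
    "https://example.com/dresses": "https://example.com/dresses",
    "https://example.com/dresses?sort=price": None,
    "https://example.com/dresses?color=blue": "https://example.com/dresses",
}
print(missing_canonicals(crawl))
```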

These are the steps I took to fix the issue:

Pagination

There are two reports to check out:

In the example below, I used DeepCrawl to find that a client had reciprocal pagination tags:
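Pagination tags are consistent when every page that declares `rel="next"` to another page is pointed back to by that page’s `rel="prev"`. A minimal sketch of that check, assuming you’ve already extracted each URL’s `prev`/`next` hrefs into a dictionary (the paths are hypothetical):

```python
from typing import Optional

# url -> (prev_href, next_href); None means the tag is absent
Pagination = dict[str, tuple[Optional[str], Optional[str]]]


def broken_pagination(pages: Pagination) -> list[str]:
    """Report pages whose rel="next" target doesn't point back via rel="prev"."""
    problems = []
    for url, (_prev, nxt) in pages.items():
        if nxt is None:
            continue
        target = pages.get(nxt)
        if target is None:
            problems.append(f"{url} -> next {nxt} was not crawled")
        elif target[0] != url:
            problems.append(f"{nxt} has prev={target[0]!r}, expected {url}")
    return problems


pages = {
    "/blog/1": (None, "/blog/2"),
    "/blog/2": ("/blog/1", "/blog/3"),
    "/blog/3": ("/blog/1", None),  # prev should be /blog/2
}
print(broken_pagination(pages))
```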

How to fix:

Max Redirections

Review the “Max Redirections” report to see all the pages that redirect more than 4 times. John Mueller mentioned in 2015 that Google can stop following redirects if there are more than five.
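The report is essentially counting hops. If you have a source-to-target redirect map (exported from a crawler or the server config; the paths here are hypothetical), a small sketch like this counts the hops from each starting URL and catches loops, so you can flag anything above four hops the way the report does:

```python
def redirect_hops(start: str, redirects: dict[str, str], limit: int = 10) -> tuple[int, str]:
    """Follow a source->target redirect map from `start`.

    Returns (number_of_hops, final_url). Raises ValueError on a loop
    or when more than `limit` hops are needed.
    """
    seen = {start}
    current, hops = start, 0
    while current in redirects:
        current = redirects[current]
        hops += 1
        if current in seen:
            raise ValueError(f"redirect loop at {current}")
        if hops > limit:
            raise ValueError(f"more than {limit} hops from {start}")
        seen.add(current)
    return hops, current


redirects = {"/a": "/b", "/b": "/c", "/c": "/d", "/d": "/e", "/e": "/f"}
too_long = [url for url in redirects if redirect_hops(url, redirects)[0] > 4]
print(too_long)
```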

While some people refer to these crawl errors as eating up the “crawl budget,” Gary Illyes refers to this as “host load.” It’s important to make sure your pages render properly so that host load is used efficiently.

Here’s a brief overview of the response codes you might see:
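The reason phrases for these codes are standardized, so Python’s `http.HTTPStatus` can supply them. The audit buckets in this sketch are a rough shorthand chosen for illustration (an assumption), not categories from DeepCrawl:

```python
from http import HTTPStatus


def describe_status(code: int) -> tuple[str, str]:
    """Return (standard reason phrase, rough audit bucket) for an HTTP status code.

    HTTPStatus(code) raises ValueError for codes it doesn't know.
    """
    phrase = HTTPStatus(code).phrase
    if code in (301, 308):
        bucket = "permanent redirect"
    elif code in (302, 303, 307):
        bucket = "temporary redirect"
    elif 200 <= code < 300:
        bucket = "success"
    elif 400 <= code < 500:
        bucket = "client error"
    elif 500 <= code < 600:
        bucket = "server error"
    else:
        bucket = "other"
    return phrase, bucket


for code in (200, 301, 302, 404, 410, 500, 503):
    print(code, *describe_status(code))
```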

How to fix:

What to Look For When Using Screaming Frog

The second thing I do when I get a new client site is to add their URL to Screaming Frog.

Depending on the size of your client’s site, I may configure the settings to crawl specific areas of the site at a time.

Here is what my Screaming Frog spider configuration looks like:

You can do this in your spider settings or by excluding areas of the site.

Once you get your Screaming Frog results back, here are the things I look for:

Google Analytics Code

Screaming Frog can help you identify what pages are missing the Google Analytics code (UA-1234568-9). To find the missing Google Analytics code, follow these steps:
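Screaming Frog’s custom search does this inside the tool. The same idea as a standalone sketch, assuming you’ve saved each page’s HTML into a dictionary (the pages are hypothetical; `UA-1234568-9` is the example ID above). Newer GA4 tags use `G-` measurement IDs, which this pattern won’t match.

```python
import re

# Universal Analytics property IDs look like UA-<account digits>-<property digits>.
UA_ID = re.compile(r"\bUA-\d{4,10}-\d{1,4}\b")


def pages_missing_ga(pages: dict[str, str], property_id: str) -> list[str]:
    """Return URLs whose HTML doesn't contain the expected UA property ID."""
    return [url for url, html in pages.items() if property_id not in UA_ID.findall(html)]


# Hypothetical saved HTML: URL -> source
pages = {
    "/": "<script>ga('create', 'UA-1234568-9', 'auto');</script>",
    "/about": "<p>No tracking here</p>",
    "/blog": "<script>ga('create', 'UA-7654321-1', 'auto');</script>",  # wrong property
}
print(pages_missing_ga(pages, "UA-1234568-9"))
```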

How to fix:

Google Tag Manager

Screaming Frog can also help you find out what pages are missing the Google Tag Manager snippet with similar steps:
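Google Tag Manager’s standard install has two parts: a `<script>` that loads `gtm.js` (typically in the `<head>`) and a `<noscript>` iframe pointing at `ns.html` (right after the opening `<body>` tag). A page can have one without the other, so this sketch reports on each part separately. The container ID `GTM-ABC123` and the abbreviated snippet are hypothetical placeholders.

```python
def gtm_status(html: str, container_id: str) -> dict[str, bool]:
    """Report which parts of the standard GTM install appear in a page's HTML."""
    return {
        # Loose check: gtm.js is referenced and the container ID appears somewhere.
        # (The standard snippet passes the ID as an argument, not inside the gtm.js URL.)
        "script": "googletagmanager.com/gtm.js" in html and container_id in html,
        # The <noscript> fallback embeds an iframe pointing at ns.html?id=<container>.
        "noscript": f"googletagmanager.com/ns.html?id={container_id}" in html,
    }


page = (
    "<script>(function(w,d,s,l,i){/* ... */"
    "j.src='https://www.googletagmanager.com/gtm.js?id='+i+dl;"
    "})(window,document,'script','dataLayer','GTM-ABC123');</script>"
)
print(gtm_status(page, "GTM-ABC123"))
```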

How to fix:

You’ll also want to check whether your client’s site is using schema markup. Schema, or structured data, helps search engines understand what a page on the site is about.

To check for schema markup in Screaming Frog, follow these steps:
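Outside Screaming Frog, a quick way to see which schema types a page declares is to pull out its JSON-LD blocks and read each `@type`. A minimal standard-library sketch (the HTML is hypothetical); it only covers JSON-LD, not Microdata or RDFa, and it doesn’t validate required properties.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> blocks."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def schema_types(html: str) -> list[str]:
    """Return the @type of every JSON-LD item on the page ("INVALID JSON" if unparseable)."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            types.append("INVALID JSON")
            continue
        items = data if isinstance(data, list) else [data]
        types.extend(str(item.get("@type", "?")) for item in items if isinstance(item, dict))
    return types


html = ('<head><script type="application/ld+json">'
        '{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}'
        '</script></head>')
print(schema_types(html))
```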

To determine how many pages are being indexed for your client, follow these steps in Screaming Frog:
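Crawlers generally decide whether a URL is indexable from a handful of signals: the response code, a `noindex` in the meta robots tag or `X-Robots-Tag` header, and a canonical pointing elsewhere. A minimal sketch of that logic, where the inputs are hypothetical crawl fields; it ignores robots.txt blocking and user-agent-specific directives such as `googlebot: noindex`.

```python
from typing import Optional


def indexability(status: int, meta_robots: str = "", x_robots_tag: str = "",
                 canonical: Optional[str] = None, url: str = "") -> str:
    """Rough indexability verdict for one crawled URL."""
    if status != 200:
        return f"non-indexable: status {status}"
    # Directives are comma-separated; "none" is shorthand for "noindex, nofollow".
    tokens = {t.strip().lower() for t in f"{meta_robots},{x_robots_tag}".split(",")}
    if "noindex" in tokens or "none" in tokens:
        return "non-indexable: noindex"
    if canonical and canonical != url:
        return f"non-indexable: canonicalized to {canonical}"
    return "indexable"


print(indexability(200, meta_robots="noindex, follow"))
print(indexability(200, canonical="https://example.com/a", url="https://example.com/a?x=1"))
```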

How to fix:

Google announced in 2016 that Chrome would start blocking Flash by default, citing slow page load times. So, during an audit, you want to identify whether your new client is still using Flash.

To do this in Screaming Frog, try this:
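In a crawl, Flash usually shows up as `.swf` files referenced from `<object>`, `<embed>`, or `<param>` tags. If you’d rather scan saved HTML outside Screaming Frog, a regex sketch like this lists them per page (the page content is hypothetical, and a regex is a heuristic, not a full HTML parse):

```python
import re

# Quoted attribute values ending in .swf, optionally followed by a query string.
SWF_REF = re.compile(r"""["']([^"']+\.swf)(?:\?[^"']*)?["']""", re.IGNORECASE)


def flash_assets(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each URL to the .swf files its HTML references (pages without Flash are omitted)."""
    found = {}
    for url, html in pages.items():
        hits = SWF_REF.findall(html)
        if hits:
            found[url] = hits
    return found


pages = {
    "/": '<embed src="/media/intro.swf" type="application/x-shockwave-flash">',
    "/about": "<p>Plain HTML</p>",
}
print(flash_assets(pages))
```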

How to fix:

Here’s an example of HTML5 code for adding a video:
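The basic HTML5 pattern is a `<video>` element with `controls`, one or more `<source>` children, and fallback text for browsers that can’t play it. As a sketch, here’s a small Python helper that emits that markup; the file paths, the `video/mp4` type, and the 640px width are placeholder assumptions.

```python
from html import escape


def html5_video(src: str, mime_type: str = "video/mp4", poster: str = "") -> str:
    """Build a basic HTML5 <video> element to replace a Flash player."""
    poster_attr = f' poster="{escape(poster)}"' if poster else ""
    return (
        f'<video controls width="640"{poster_attr}>\n'
        f'  <source src="{escape(src)}" type="{escape(mime_type)}">\n'
        "  Your browser does not support the video tag.\n"
        "</video>"
    )


print(html5_video("/media/intro.mp4", poster="/media/intro.jpg"))
```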


JavaScript

According to Google’s 2015 announcement, JavaScript is okay to use on your website as long as your robots.txt isn’t blocking Googlebot from the JavaScript and CSS files it needs (we’ll dig into robots.txt in a bit!). But you still want to take a peek at how JavaScript is being delivered on your site.
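One quick way to see how JavaScript is delivered is to list every `<script>` tag and note whether it’s external or inline, whether it sits in the `<head>`, and whether it uses `async` or `defer`. This sketch flags external head scripts with neither attribute as render-blocking; that heuristic is an assumption for illustration, and it ignores `type="module"` scripts, which browsers defer by default. The HTML is hypothetical.

```python
from html.parser import HTMLParser


class ScriptAudit(HTMLParser):
    """Record each <script>: src (or inline), whether it's in <head>, async/defer flags."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.scripts: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script":
            a = dict(attrs)  # boolean attributes like async appear with value None
            self.scripts.append({
                "src": a.get("src") or "(inline)",
                "in_head": self.in_head,
                "async": "async" in a,
                "defer": "defer" in a,
            })

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def render_blocking(html: str) -> list[str]:
    """External scripts in <head> that have neither async nor defer."""
    audit = ScriptAudit()
    audit.feed(html)
    audit.close()
    return [s["src"] for s in audit.scripts
            if s["in_head"] and s["src"] != "(inline)" and not (s["async"] or s["defer"])]


html = ('<html><head><script src="/js/app.js"></script>'
        '<script src="/js/analytics.js" async></script><script>var x = 1;</script></head>'
        '<body><script src="/js/footer.js"></script></body></html>')
print(render_blocking(html))
```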

How to fix:

Robots.txt

When you’re reviewing a robots.txt for the first time, you want to look to see if anything important is being blocked or disallowed.

For example, if you see this code:

User-agent: *
Disallow: /

Your client’s website is blocked from all web crawlers.

But if the file looks more like Zappos’ robots.txt file, you should be good to go.

They are only blocking what they don’t want web crawlers to find. The content being blocked isn’t relevant or useful to web crawlers.
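To sanity-check a robots.txt against the pages that matter, Python’s standard `urllib.robotparser` can answer “may this user agent fetch this URL?” A minimal sketch with hypothetical rules and URLs. Caveat: the standard-library parser uses simple prefix matching with first-match-wins ordering and doesn’t implement Google’s `*` and `$` wildcards or longest-match precedence, so treat its answers as a first pass and confirm edge cases with Google’s own tools.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that `user_agent` may not fetch under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


block_everything = "User-agent: *\nDisallow: /"
selective = "User-agent: *\nDisallow: /cart/\nDisallow: /search"

important = [
    "https://example.com/",
    "https://example.com/shoes/red",
    "https://example.com/static/app.js",
    "https://example.com/cart/checkout",
]
print(blocked_urls(block_everything, important))  # every URL is blocked
print(blocked_urls(selective, important))         # only the cart URL is blocked
```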

How to fix:

Crawl Errors

I use DeepCrawl, Screaming Frog, Google Search Console, and Bing Webmaster Tools to find and cross-check my client’s crawl errors.

To find your crawl errors in Screaming Frog, follow these steps:

How to fix:

Redirect Chains

Redirect chains don’t just create a poor user experience; they also slow down page speed, drag down conversion rates, and can cost you any link love you received before.

Fixing redirect chains is a quick win for any company.

How to fix:
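One common fix is to update each old URL’s 301 so it points straight at the final destination, and to update internal links to the final URL. Given a source-to-target map of existing redirects (hypothetical paths below), this sketch computes the one-hop version and refuses to run on loops:

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every redirect source straight at its final destination (one hop)."""
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:  # keep following until we reach a non-redirecting URL
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


chain = {"/old-page": "/older-page", "/older-page": "/new-page", "/new-page": "/final-page"}
print(flatten_redirects(chain))
```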

Internal & External Links

When a user clicks on a link to your site and gets a 404 error, it’s not a good user experience.

And it doesn’t make search engines like your site any better, either.

To find broken internal and external links, I use Integrity for Mac. You can also use Xenu’s Link Sleuth if you’re a PC user.

I’ll also show you how to find broken internal and external links in Screaming Frog and DeepCrawl if you’re using that software.
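Link checkers work in two stages: collect every link on each page, then request each one and record the status code. The first stage is easy to sketch with the standard library; this version resolves relative hrefs and splits internal from external links by hostname (treating `www.` and the bare domain as different hosts is a simplification). The page and links are hypothetical, and the request stage is omitted.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def split_links(page_url: str, html: str) -> tuple[list[str], list[str]]:
    """Resolve every <a href> on a page and split it into (internal, external)."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlsplit(page_url).netloc
    internal, external = [], []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)
        if urlsplit(absolute).scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript:, etc.
        (internal if urlsplit(absolute).netloc == host else external).append(absolute)
    return internal, external


html = ('<a href="/about">About</a> <a href="related">Related</a> '
        '<a href="https://other.org/x">X</a> <a href="mailto:hi@example.com">Mail</a>')
print(split_links("https://example.com/blog/post", html))
```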

How to fix:

Every time you take on a new client, you want to review their URL format. What am I looking for in the URLs?
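Checklists vary from SEO to SEO. As an assumption, the sketch below flags a few commonly cited URL-format issues: uppercase letters, underscores, query parameters, repeated slashes, and length over a hypothetical 100-character threshold.

```python
from urllib.parse import urlsplit

MAX_LENGTH = 100  # hypothetical threshold; tune per site


def url_issues(url: str) -> list[str]:
    """Return human-readable URL-format issues (an empty list means none found)."""
    parts = urlsplit(url)
    issues = []
    if parts.path != parts.path.lower():
        issues.append("uppercase letters in path")
    if "_" in parts.path:
        issues.append("underscores instead of hyphens")
    if parts.query:
        issues.append("query parameters")
    if "//" in parts.path:
        issues.append("repeated slashes")
    if len(url) > MAX_LENGTH:
        issues.append(f"longer than {MAX_LENGTH} characters")
    return issues


print(url_issues("https://example.com/Blog/my_first_post?ref=nav"))
```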

How to fix:

Step 2: Review Google Search Console and Bing Webmaster Tools

Tools:

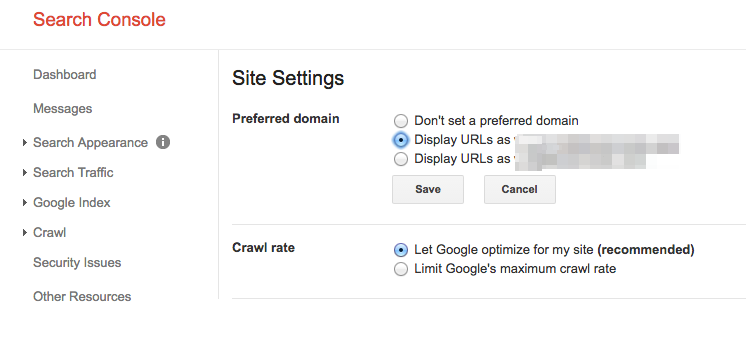

Set a Preferred Domain

Since the Panda update, it’s been beneficial to tell search engines which domain you prefer (for example, www or non-www). It also helps make sure all your links give one site the extra love instead of being split across two sites.

How to fix:

With the announcement that Penguin is real-time, it’s vital that your client’s backlinks meet Google’s standards.

If you notice a large chunk of backlinks coming to your client’s site from one page on a website, you’ll want to take the necessary steps to clean it up, and FAST!
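A quick way to spot that pattern in a backlink export is to count links by referring page and by referring domain and flag anything holding an outsized share. The 30% threshold and the sample URLs are hypothetical; a high share isn’t proof of spam, just a signal to review manually.

```python
from collections import Counter
from urllib.parse import urlsplit


def concentrated_sources(sources: list[str], share: float = 0.3) -> dict[str, list[str]]:
    """Flag referring pages and domains that account for >= `share` of all backlinks."""
    if not sources:
        return {"pages": [], "domains": []}
    total = len(sources)
    by_page = Counter(sources)
    by_domain = Counter(urlsplit(s).netloc.lower() for s in sources)
    return {
        "pages": [p for p, n in by_page.most_common() if n / total >= share],
        "domains": [d for d, n in by_domain.most_common() if n / total >= share],
    }


# Hypothetical export: one entry per backlink, giving the linking page's URL
sources = [
    "http://spammy-directory.biz/page1",
    "http://spammy-directory.biz/page1",
    "http://spammy-directory.biz/page2",
    "https://news.example.org/story",
    "https://blog.example.net/post",
]
print(concentrated_sources(sources))
```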

How to fix:

Here’s an example of what my disavow file looks like:
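Google’s disavow file is a plain-text (.txt) file, encoded in UTF-8 or 7-bit ASCII, with one entry per line: `domain:` followed by a hostname to disavow a whole site, a full URL to disavow a single page, and `#` at the start of a comment line. A small sketch that assembles one from your review results (the domains and URLs are hypothetical):

```python
def build_disavow(domains: list[str], urls: list[str], note: str = "") -> str:
    """Assemble disavow-file text: optional comment, then domain: lines, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    lines += [f"domain:{d}" for d in sorted(set(domains))]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


text = build_disavow(
    domains=["spammy-directory.biz"],
    urls=["http://link-farm.example/page7.html"],
    note="Links reviewed manually",
)
print(text)
```

Save the result as a UTF-8 `.txt` file before uploading it.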

As an SEO consultant, it’s my job to learn my client’s market landscape. I need to know who their target audience is, what they are searching for, and how they are searching. To start, I look at the keyword search terms they are already getting traffic from.
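If you export query data from Search Console’s performance report, a few lines of code can rank the terms already driving clicks and compute their click-through rate. This sketch assumes a CSV with `Query`, `Clicks`, and `Impressions` columns; those column names and the sample rows are assumptions, so match them to your actual export.

```python
import csv
import io


def top_queries(csv_text: str, n: int = 5) -> list[tuple[str, int, int, float]]:
    """Return the n queries with the most clicks as (query, clicks, impressions, ctr)."""
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        clicks, impressions = int(row["Clicks"]), int(row["Impressions"])
        ctr = clicks / impressions if impressions else 0.0
        rows.append((row["Query"], clicks, impressions, ctr))
    return sorted(rows, key=lambda r: r[1], reverse=True)[:n]


sample = """Query,Clicks,Impressions
red running shoes,120,2400
trail shoes,45,3000
shoe store near me,8,900
"""
for query, clicks, impressions, ctr in top_queries(sample, 2):
    print(f"{query}: {clicks} clicks, {impressions} impressions, CTR {ctr:.1%}")
```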

Sitemaps are essential for getting search engines to crawl your client’s website; they speak the search engines’ language. When creating sitemaps, there are a few things to know:
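Whatever tool generates the sitemap, the output should follow the sitemaps.org protocol: a `<urlset>` in the `http://www.sitemaps.org/schemas/sitemap/0.9` namespace, one `<url><loc>` per page, no more than 50,000 URLs (and 50 MB uncompressed) per file, and XML-escaped URLs. A minimal standard-library sketch with hypothetical URLs:

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # protocol limit per sitemap file


def build_sitemap(urls: list[str]) -> str:
    """Return sitemap XML for the given absolute URLs (ElementTree escapes & and friends)."""
    if len(urls) > MAX_URLS:
        raise ValueError("split into multiple sitemaps and list them in a sitemap index")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


print(build_sitemap(["https://example.com/", "https://example.com/shoes?color=red&size=9"]))
```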

How to fix:

Crawl errors are important to check because they’re bad not only for users but also for your website’s rankings. And John Mueller has stated that a low crawl rate may be a sign of a low-quality site.

To check this in Google Search Console, go to ‘Coverage’ > ‘Details.’

To check this in Bing Webmaster Tools, go to ‘Reports & Data’ > ‘Crawl Information.’

How to fix:

Structured Data

As mentioned in the Screaming Frog schema section above, you can also review your client’s schema markup in Google Search Console.

Use the individual rich result status reports in Google Search Console. (Note: The Structured Data report is no longer available.)

This will help you determine what pages have structured data errors that you’ll need to fix down the road.

How to fix:

Step 3: Review Google Analytics

Tools:

When I first get a new client, I set up three different views in Google Analytics.

These different views give me the flexibility to make changes without affecting the data.

How to fix:

You want to make sure you add your IP address and your client’s IP address to the filters in Google Analytics so you don’t get any false traffic.
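Google Analytics applies the exclusion inside its own filter settings, so this isn’t something you’d deploy; it’s a sketch of the matching logic, handy for checking which single addresses and ranges your filter needs to cover. The addresses come from the reserved documentation ranges and stand in for real office IPs.

```python
import ipaddress

# Hypothetical addresses to exclude: your office IP and the client's office range.
EXCLUDED = [
    ipaddress.ip_network("203.0.113.7/32"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_internal(ip: str) -> bool:
    """True if the address falls inside any excluded network."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in EXCLUDED)


hits = ["203.0.113.7", "198.51.100.42", "192.0.2.10"]
print([ip for ip in hits if not is_internal(ip)])  # only genuine visitor traffic remains
```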

How to fix:

Tracking Code

You can manually check the source code, or you can use my Screaming Frog technique from above.

If the code is there, you’ll want to confirm that it’s firing in real time.

How to fix:

If you had a chance to play around in Google Search Console, you probably noticed the ‘Coverage’ section.

When I’m auditing a client, I’ll review their indexing in Google Search Console compared to Google Analytics. Here’s how:
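Once you have both lists, the comparison is simple set arithmetic. This sketch assumes you’ve exported indexed URLs from the Coverage report and organic landing pages from Google Analytics and normalized both to the same path format; the sets below are hypothetical.

```python
def compare_indexing(indexed: set[str], organic_landing: set[str]) -> dict[str, set[str]]:
    """Compare indexed URLs (Search Console) with organic landing pages (Analytics)."""
    return {
        # Indexed but earning no organic visits: candidates for improvement or pruning.
        "indexed_no_traffic": indexed - organic_landing,
        # Getting organic visits but missing from the indexed export: often a URL-format
        # mismatch or export limit worth investigating.
        "traffic_not_indexed": organic_landing - indexed,
        "both": indexed & organic_landing,
    }


indexed = {"/", "/shoes", "/about", "/old-promo"}
organic = {"/", "/shoes", "/blog/guide"}
for bucket, urls in compare_indexing(indexed, organic).items():
    print(bucket, sorted(urls))
```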

How to fix:

Campaign Tagging

The last thing you’ll want to check in Google Analytics is whether your client is using campaign tagging correctly. You don’t want to lose credit for the work you’re doing because someone forgot about campaign tagging.
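Many campaign-tagging mistakes come from inconsistent, hand-typed UTM parameters, and campaign values are case-sensitive in reports. A sketch of a helper that adds the standard `utm_source`, `utm_medium`, and `utm_campaign` parameters while preserving any existing query string; lowercasing values and replacing spaces with underscores are conventions assumed here for consistency, not requirements.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_url(url: str, source: str, medium: str, campaign: str) -> str:
    """Append UTM parameters, normalized to lowercase, keeping existing parameters first."""
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))
    params.update({
        "utm_source": source.lower(),
        "utm_medium": medium.lower(),
        "utm_campaign": campaign.lower().replace(" ", "_"),
    })
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(params), parts.fragment))


print(tag_url("https://example.com/sale?ref=home", "Newsletter", "Email", "Spring Sale"))
```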

How to fix:

You can use Google Analytics to gain insight into potential keyword gems for your client. To find keywords in Google Analytics, follow these steps:

Step 4: Manual Check

Tools:

1 Version of Your Client’s Site is Searchable

Check all the different ways you could search for a website. For example:

As Highlander would say, “there can be only one” website that is searchable.
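The usual variants are the four combinations of `http`/`https` and `www`/non-`www`. A sketch that generates them and, given the final URL each one landed on after redirects (gathered however you like; the results below are hypothetical), confirms they all converge on a single version:

```python
from itertools import product


def site_variants(domain: str) -> list[str]:
    """The four scheme/host combinations that should all resolve to one version."""
    bare = domain[4:] if domain.startswith("www.") else domain
    return [f"{scheme}://{host}/" for scheme, host in product(("http", "https"), (bare, f"www.{bare}"))]


def single_version(final_urls: dict[str, str]) -> bool:
    """True if every variant ended up at the same final URL."""
    return len(set(final_urls.values())) == 1


variants = site_variants("example.com")
observed = {v: "https://www.example.com/" for v in variants}
observed["http://example.com/"] = "http://example.com/"  # this variant doesn't redirect
print(variants)
print(single_version(observed))
```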

How to fix:

Conduct a manual search in Google and Bing to estimate how many pages each search engine has indexed. This number won’t always match your Google Analytics and Google Search Console data, but it should give you a rough estimate.

To check, do the following:

How to fix:

I’ll run a quick check to see if the top pages are being cached by Google. Google uses these cached pages to connect your content with search queries.

To check if Google is caching your client’s pages, do this:

http://webcache.googleusercontent.com/search?q=cache:https://www.searchenginejournal.com/pubcon-day-3-women-in-digital-amazon-analytics/176005/

Make sure to toggle over to the ‘Text-only version.’

You can also check this in the Wayback Machine.

How to fix:

While this may get a little technical for some, it’s vital to your SEO success to check the hosting software associated with your client’s website. Poor hosting can harm SEO, and then all your hard work will be for nothing.

You’ll need access to your client’s server to manually check any issues. The most common hosting issues I see are having the wrong TLD and slow site speed.

How to fix:

Image Credits

Featured Image: Paulo Bobita

All screenshots taken by author
